Summary

- The future of chatbots is shifting from simple scripted replies to grounded, action-oriented systems that can understand context, complete tasks, and support real customer service workflows.

- Businesses are investing in trends like agentic AI, multilingual support, voice interfaces, and IoT-connected experiences, but long-term success depends on accuracy, clear scope, and strong human handoff options.

- Advanced chatbots can reduce support workload, improve customer experience, surface useful insights, and scale more easily across channels when they are built around trusted content and practical use cases.

- The biggest risks still come from confident wrong answers, weak escalation paths, outdated information, unnecessary data collection, and fragile integrations that make bots unreliable over time.

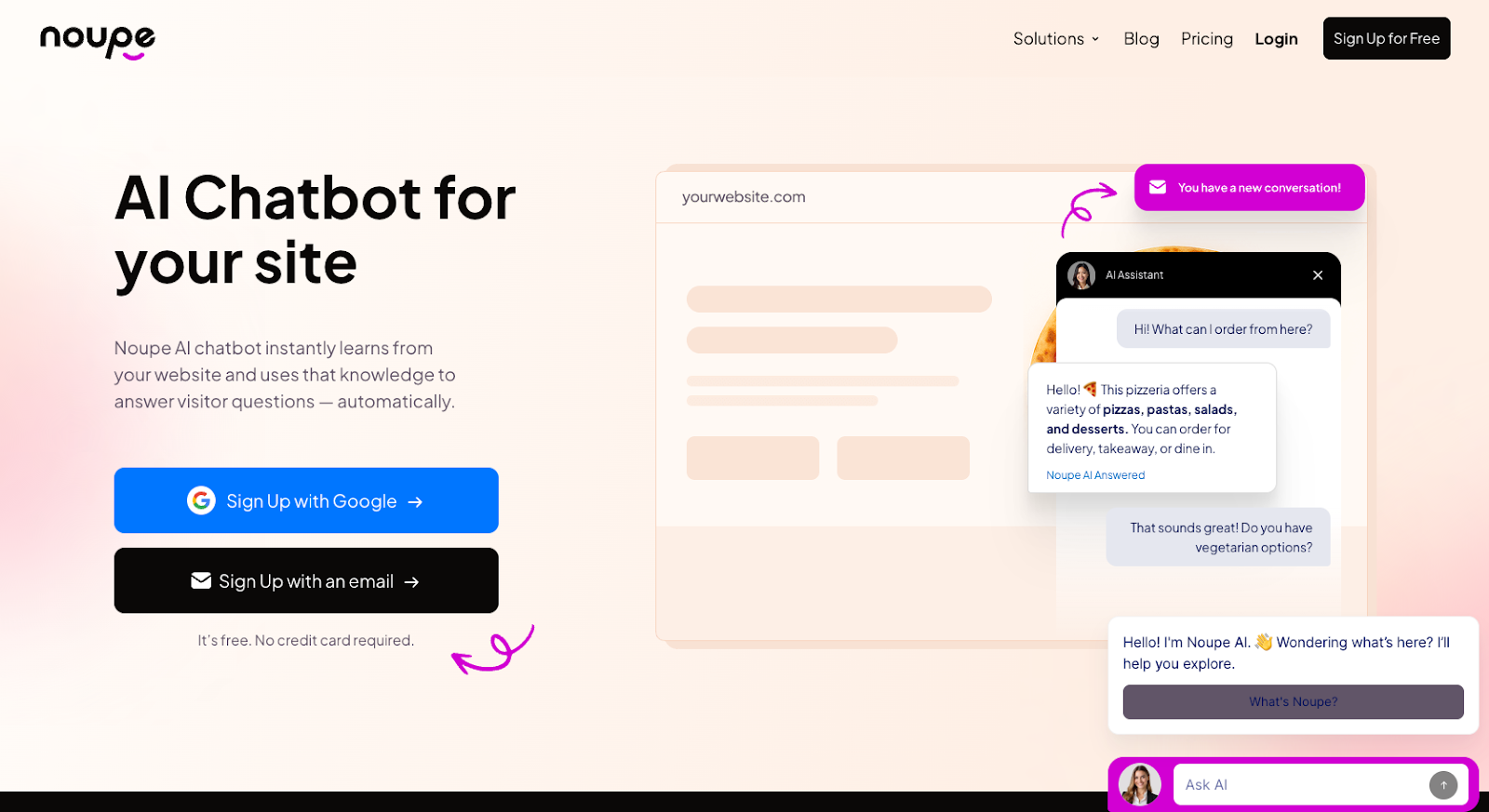

- Tools like Noupe help companies launch faster by turning existing website content and custom knowledge into AI-powered support, making it easier to keep chatbots accurate, useful, and aligned with rising customer expectations.

The future of chatbots keeps coming up because teams are actively wrestling with it. They’re planning around smarter chatbot development, such as agent-style automation and better language understanding, and not just because it sounds cool. After years of clunky bots, it finally feels like some of this might actually work.

What’s changed is that today’s systems can actually follow context, take action, and handle real support work without falling apart. That said, there’s still a mismatch between expectations and reality.

Analysts expect the chatbot market to cross $27 billion by 2030, and almost every company you interact with is testing some form of AI chatbot development. Adoption is high. Trust is still catching up. Studies suggest about 53% of customers actively dislike seeing AI in customer service.

For companies, this is a signal that if AI chatbot technology is going to pay off in the years ahead, following the trends isn’t enough. What really matters is creating a bot that can fix the parts of customer experience that are still broken.

How did we get here? The evolution of AI chatbot technology

Most people aren’t asking “What is a chatbot?” anymore, but they probably should be, because the definition has changed. Early chatbots date back to 1966, when Joseph Weizenbaum created ELIZA, a simple program that could recognize keywords and generate responses. It inspired the first generation of chatbots.

That generation was built on rule-based logic and decision trees. Bots followed scripts, waited for exact phrasing, and collapsed the second someone veered off track. That era taught teams an important lesson the hard way: customers don’t talk like flowcharts. They type fragments. They change their mind mid-sentence. They paste screenshots and expect you to keep up.

The 21st century opened a new avenue for chatbot development with the rise of AI. Natural language processing made intent detection and confidence scores possible.

Bots got better at guessing what you meant, yet they still broke whenever policies changed or content drifted. Most teams learned that maintaining a bot was harder than launching one.

When generative models entered the picture, the chatbot future suddenly felt almost unreal. Conversations stopped sounding robotic. Answers came back fast and fluid. For a while, it looked like bots had finally caught up to how people actually talk. Then reality set in. Hallucinations showed up quickly. Bots answered with confidence even when the information wasn’t grounded in anything real. That’s when trust started to crack.

A lot of companies quietly pulled features back in 2024 and 2025 once they saw how hard it was to recover from a single bad answer.

What’s driving the future of chatbots now isn’t clever phrasing or bigger models. It’s knowing when not to guess. Modern AI chatbot technology stays close to approved sources, pulls answers from real content, and avoids filling in the blanks. That discipline matters more as bots handle longer conversations, accept different types of input, and start taking actions instead of just talking.
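
To make “knowing when not to guess” concrete, here is a minimal sketch of a bot that answers only from approved snippets and hands off when nothing matches well enough. It assumes a naive keyword-overlap score, a hypothetical `MIN_SCORE` threshold, and made-up snippet text; a real system would use proper retrieval (a search index or embeddings) and a language model to phrase the reply, but the abstain rule works the same way.

```python
import re

# Hypothetical approved content; in practice this would come from your site or knowledge base.
APPROVED_SNIPPETS = {
    "returns": "You can return unused items within 30 days of delivery.",
    "shipping": "Standard shipping takes 3 to 5 business days.",
}

# Common words ignored when scoring, so "can you" doesn't count as a match.
STOPWORDS = {"a", "an", "the", "i", "you", "my", "your", "do", "to", "of",
             "can", "how", "what", "is", "have", "many"}

# Hypothetical threshold: share of the question's key words that must appear in a snippet.
MIN_SCORE = 0.5

FALLBACK = "I'm not sure about that one. Let me connect you with a person."


def key_words(text: str) -> set[str]:
    """Lowercase the text, split it into words, and drop stopwords."""
    return set(re.findall(r"[a-z0-9']+", text.lower())) - STOPWORDS


def answer(question: str) -> str:
    """Return the best-matching approved snippet, or a handoff message if none matches well."""
    q_words = key_words(question)
    if not q_words:
        return FALLBACK
    best_text, best_score = None, 0.0
    for text in APPROVED_SNIPPETS.values():
        score = len(q_words & key_words(text)) / len(q_words)
        if score > best_score:
            best_text, best_score = text, score
    # Abstain rather than improvise when even the best source is a weak match.
    if best_text is None or best_score < MIN_SCORE:
        return FALLBACK
    return best_text


print(answer("How many days do I have to return items?"))
# -> You can return unused items within 30 days of delivery.
print(answer("Can you fix my router?"))
# -> I'm not sure about that one. Let me connect you with a person.
```

The design choice that matters is the fallback branch: a weak match produces a handoff, never an improvised answer.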

The trends influencing the future of chatbots

When people talk about the future of chatbots, they usually want a neat list. New features. New tools. Some sign that everything’s finally coming together.

What’s really happening feels messier.

You can see it if you watch how teams behave instead of what they publish. There’s a lot less chest-thumping about “smart bots” and a lot more quiet cleanup work. Fewer grand launches, more small fixes. More “let’s limit what this thing can touch before it breaks something important.”

That change in mindset explains why the chatbot future in 2026 doesn’t look much like it did even a couple of years ago. Companies didn’t suddenly get better models. They got tired of bots that talked confidently and did nothing useful, or did the wrong thing.

If you’ve ever rolled out a bot that technically worked but still made support harder, you already know the feeling. Conversations loop. Customers rephrase the same question three times. Someone eventually jumps in and apologizes. The bot stays live anyway.

So when you hear about new chatbot trends, read them through that lens. Not “what’s possible,” but “what teams are finally willing to trust.” That’s how you create a chatbot that pays off.

1. Agentic AI integration: Bots that do things

The most obvious change in the future of chatbots is simple: talking isn’t enough anymore.

Teams want bots that finish a task and get out of the way.

That’s why you’re hearing more about agents instead of chatbots. A chatbot can answer; an agent can book an appointment, update a ticket, or collect the missing details from a conversation and pass them along cleanly. Agents behave more like team members than simple machines.

About 23% of companies were already scaling agentic AI initiatives in 2025, and Gartner predicts that agentic AI will autonomously resolve 80% of common customer service issues by 2029.

That’s where everything is heading: toward bots that can retain context, make decisions, and handle increasingly complicated workflows without human intervention.

Still, adding agentic capabilities to chatbot use cases raises the stakes. When a bot can take action, mistakes cost real money and real trust. That’s why smart AI chatbot development in 2026 looks restrained: narrow permissions, clear stop points, and fast handoffs matter.

If you’re thinking about this direction, it helps to look at how a bot behaves across channels rather than treating it as a widget. That’s where omnichannel AI chatbot setups and AI chatbot call center patterns come into play. The bot doesn’t live on a page; it lives in a workflow.
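
One way to picture narrow permissions, clear stop points, and fast handoffs is an explicit allowlist that every agent action passes through before it runs. The sketch below is illustrative only: the action names, the refund cap, and the `execute_action` helper are hypothetical, and a real agent would call your actual booking, ticketing, or payment systems where this one just returns a status string.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Permission:
    max_amount: Optional[float] = None  # hypothetical monetary cap; None means no amount involved
    needs_confirmation: bool = False    # a "stop point": the customer must confirm first


# Hypothetical allowlist: any action not listed here is escalated to a human.
PERMISSIONS = {
    "update_ticket": Permission(),
    "book_appointment": Permission(needs_confirmation=True),
    "issue_refund": Permission(max_amount=50.0, needs_confirmation=True),
}


def execute_action(action: str, amount: float = 0.0, confirmed: bool = False) -> str:
    """Check an agent's proposed action against the allowlist before running it."""
    perm = PERMISSIONS.get(action)
    if perm is None:
        return "escalate: action not permitted"
    if perm.max_amount is not None and amount > perm.max_amount:
        return "escalate: amount over limit"
    if perm.needs_confirmation and not confirmed:
        return "pause: ask customer to confirm"
    # Placeholder for the real system call (booking API, ticketing system, payments).
    return f"done: {action}"


print(execute_action("issue_refund", amount=20.0, confirmed=True))   # done: issue_refund
print(execute_action("issue_refund", amount=200.0, confirmed=True))  # escalate: amount over limit
print(execute_action("cancel_subscription"))                         # escalate: action not permitted
```

Denying by default means every new capability has to be granted on purpose, which is the kind of restraint described above.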

2. Personalization that actually helps

Personalization used to stop at “Hi, Sarah.” Nobody bought it.

The version that matters in the chatbot future is practical: the bot remembers what you just asked, understands what page you’re on, and doesn’t make you repeat yourself when you escalate. That’s it. That alone feels like relief.

Capgemini’s 2025 research makes the same point from the agent side: 73% of agents said gen AI reduced time spent on mundane tasks, and 70% said it reduced overall workload. That only happens when the bot collects the right info up front and keeps the thread clean.
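
Not making people repeat themselves mostly comes down to carrying state across the handoff. Here is a minimal sketch, assuming a hypothetical `Conversation` record that tracks the current page, the details collected so far, and the customer’s messages; the field names and note format are illustrative, not taken from any particular helpdesk product.

```python
from dataclasses import dataclass, field


@dataclass
class Conversation:
    page_url: str  # the page the customer was on when the chat started
    customer_messages: list[str] = field(default_factory=list)
    collected: dict[str, str] = field(default_factory=dict)  # details gathered up front, e.g. order ID

    def add_message(self, text: str) -> None:
        self.customer_messages.append(text)

    def handoff_note(self) -> str:
        """Build a short note so the human agent starts with full context."""
        details = ", ".join(f"{k}={v}" for k, v in self.collected.items()) or "none"
        lines = [f"Page: {self.page_url}", f"Details: {details}", "Customer said:"]
        lines += [f"- {m}" for m in self.customer_messages]
        return "\n".join(lines)


convo = Conversation(page_url="/orders/1234")
convo.add_message("My package never arrived.")
convo.collected["order_id"] = "1234"
print(convo.handoff_note())
```

The agent receives the page, the collected details, and the customer’s own words in one place, so the first human reply can move the issue forward instead of restarting it.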

Good personalization doesn’t have to mean personalizing everything. It should just mean using data to simplify things when it makes sense, like when a customer needs a bot to remember the last problem they had, or suggest a relevant product.

It can also include making sure your bot consistently sounds like your business. Generic bots don’t create a “personalized” experience. When you can adjust the tone to match your company and the situation, the conversation feels more relevant.

3. Multilingual chat is becoming the default

Your business and website already have a multilingual audience. Even if you don’t market globally. Even if your support team works in one timezone and one language.

So the future of chatbots includes multilingual capability out of necessity, not ambition. It’s not just translation, either. It’s policy accuracy across languages, consistent answers, and the bot refusing to guess when the source content doesn’t cover something.

Multilingual capabilities are particularly important in certain industries. Healthcare, travel, hospitality, and retail companies naturally span countries. The key to making sure your chatbot doesn’t break at geographic borders is ensuring that the underlying system truly understands the nuances of each language and adapts quickly.

4. Voice is creeping back into the future of chatbots

The chatbot vs. voicebot debate is starting to disappear, thanks to multimodal systems that can understand both text and speech.

Here’s the thing people miss when they talk about voice: you don’t “add voice” because it’s cool. You add it because the customer is already speaking. Call centers don’t disappear in the chatbot future. They get layered with automation that handles the repetitive chunks.

Vodafone’s TOBi is the clean example most teams point to because it’s bluntly operational: it resolves a large share of digital inquiries without escalation and trims time off calls. Less waiting, fewer transfers, fewer “can you repeat that?” loops. That’s what good AI chatbot technology looks like when it touches voice.

For most companies, the path forward is simple: start with text, then add voice where it saves real time (IVR, call deflection, after-hours triage).

5. IoT + chat: The “interface” trend

This trend blends with the one above. More companies are using voice assistants built into household appliances, wearables, and cars these days, and they’re adapting with chatbots that can bridge the gap between IoT devices and users.

The best IoT and chatbot ideas are usually simple: connect systems to smart tools to fix everyday problems faster. If a system goes down, it pings a chatbot, and the bot triggers a fix. We’ve already seen this in office environments, where AI tools can adjust room temperatures, book spaces, and even order extra equipment when supplies run low.

With real-time sensor updates, bots can do a lot of back-end work proactively, without waiting for a person to get in touch. That shifts customer service from reacting to problems toward preventing them.
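
The “system goes down, pings a chatbot, bot triggers a fix” loop can be sketched as an event handler that maps known sensor alerts to pre-approved fixes and escalates anything it doesn’t recognize. The event kinds, the thresholds (temperature in degrees Celsius, supply level as a fraction of capacity), and the action strings are all hypothetical stand-ins for whatever your building or device platform actually exposes.

```python
from dataclasses import dataclass


@dataclass
class SensorEvent:
    device: str
    kind: str     # hypothetical event type, e.g. "temperature" or "supply_level"
    value: float  # degrees Celsius for temperature; fraction of capacity for supply_level


def handle_event(event: SensorEvent) -> str:
    """Map a sensor event to a pre-approved fix, or escalate if there isn't one."""
    if event.kind == "temperature" and event.value > 26.0:
        return f"set {event.device} thermostat to 22.0"
    if event.kind == "supply_level" and event.value < 0.2:
        return f"reorder supplies for {event.device}"
    if event.kind in ("temperature", "supply_level"):
        return "no action needed"
    # Unknown event types go to a person instead of the bot guessing at a fix.
    return f"notify facilities team about {event.device}: {event.kind}"


print(handle_event(SensorEvent("room-3", "temperature", 28.5)))
print(handle_event(SensorEvent("door-2", "tamper", 1.0)))
```

Keeping the list of automatic fixes short and explicit is what lets the bot act proactively without acting recklessly.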

Why advanced chatbots are worth it

When a bot is scoped properly, the payoff shows up fast. Fewer “where’s my order?” messages. Fewer password resets. Fewer agents spending Monday morning pasting the same refund policy over and over. That kind of relief is what keeps teams invested in chatbot development after the novelty fades. What companies usually see looks like this:

- Lighter workloads for human teams: When bots handle high-volume questions, people get breathing room. Support queues shrink, costs drop, and sudden spikes don’t feel as painful.

- Better customer experiences: Bots respond instantly, around the clock, in the customer’s language. No waiting. No timezone math. When it works, support simply feels easier.

- Clearer insights into real problems: Advanced bots surface patterns in conversations and pass useful context to agents. You start seeing where customers get stuck instead of guessing.

- Simpler growth: Thanks to straightforward chatbot builders and tools that can extract knowledge from existing systems and websites, designing and scaling chatbots for different use cases becomes a lot easier.

- Higher sales: With chatbots using customer data to tailor interactions, companies can see higher conversion rates and stronger loyalty, which leads to better revenue outcomes over time.

All of those benefits are why it’s getting much easier to justify chatbot pricing these days. The ROI shows up in layers.

How the future of chatbots can go wrong

Even with all the progress in chatbot trends, most problems still show up in the same places. Not because teams don’t care, but because small decisions compound fast once a bot is live.

The patterns are familiar.

- Confident wrong answers: Modern AI chatbot technology doesn’t throw errors when it’s unsure. It fills the gap. That works until a user notices the answer doesn’t line up with reality. One bad answer delivered confidently is often enough to kill trust.

- Unclear scope and weak escalation: Bots get into trouble when they’re asked to handle everything. Refund disputes, cancellations, complaints, emotional edge cases. Without hard boundaries, the bot either stalls or improvises. Writing down exactly what the bot handles, and what it hands off immediately, prevents most of this.

- Missing maintenance: Chatbots rarely fail on launch. They fail quietly weeks later. Prices change. Policies shift. Products evolve. The bot keeps answering like nothing happened. Without regular review and chatbot testing, outdated answers pile up and trust erodes without warning.

- Over-collection of data: Asking for personal information “just in case” creates risk with no payoff. Users notice when a bot asks for more than it needs. Keeping questions minimal, explaining why information is requested, and making the human exit obvious goes a long way toward preserving trust.

- Fragile integrations: Even a well-trained bot struggles when it’s tied into messy systems. If it can’t access the same data your team relies on, answers drift out of sync. Users notice right away, even if they can’t explain why.
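
For the maintenance problem, even a simple staleness check helps: record when each knowledge entry was last reviewed and flag anything older than a set window. This is a minimal sketch assuming a hypothetical 90-day review window and a hand-written list of example entries; a real setup would pull entries from your knowledge base and feed the flagged topics into regular chatbot testing.

```python
from dataclasses import dataclass
from datetime import date, timedelta


@dataclass
class KnowledgeEntry:
    topic: str
    answer: str
    last_reviewed: date


REVIEW_WINDOW = timedelta(days=90)  # hypothetical policy: review every entry at least quarterly


def stale_entries(entries: list[KnowledgeEntry], today: date) -> list[str]:
    """Return the topics whose answers haven't been reviewed within the window."""
    return [e.topic for e in entries if today - e.last_reviewed > REVIEW_WINDOW]


# Hypothetical example entries.
entries = [
    KnowledgeEntry("pricing", "Plans start at $10/month.", date(2025, 1, 15)),
    KnowledgeEntry("returns", "Returns accepted within 30 days.", date(2025, 5, 1)),
]
print(stale_entries(entries, today=date(2025, 6, 1)))  # ['pricing']
```

Passing `today` in explicitly keeps the check easy to test and to run on a schedule.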

In the long run, the future of chatbots favors teams that treat these risks as part of the design work, not problems to deal with later.

How Noupe helps companies build chatbots that stay useful

As the future of chatbots matures, the gap isn’t between “smart” and “not smart.” It’s between bots that stay accurate and bots that gradually rot. Noupe lines up with how chatbot development actually works now: fast setup, grounded answers, and constant visibility into what users are asking.

What companies get:

- Automatic learning from website content: Noupe reads your public pages and builds answers from what your site already says. No manual FAQ dumps. When content changes, the bot stays in sync. That’s essential in the chatbot future, where stale answers end trust fast.

- Instant setup, no coding: You add an embed code and it’s live. Teams that can launch, read real conversations, and adjust quickly usually get more value from AI chatbot development than teams stuck waiting on long development cycles.

- Custom knowledge base support: You can add your own documents or Q&A to keep answers accurate, especially for niche or regulated topics. That kind of control is what makes AI chatbot technology dependable instead of generic.

- Customization that affects trust: Size, placement, colors, avatar, tone. These decide whether users engage or bounce. Matching your site’s voice supports real chatbot use cases and maintains consistency.

- Custom first message: Set expectations up front: what the bot handles, what it doesn’t, how to reach a human. Transparency beats cleverness in the conversational AI future.

- Real-time conversation delivery: Every chat lands in your inbox. You spot confusion early, fix gaps fast, and keep improvement lightweight, with no dashboard archaeology.

- Multi-language support: Automatic language detection and replies. No extra bots. No branching logic. This is table stakes now.

Noupe doesn’t try to impress. It helps you keep a bot useful as expectations rise, which is what actually wins in the future of chatbots.

Where the future of chatbots ends up

If you step back from the tooling and the trend reports, the future of chatbots lands somewhere pretty ordinary.

The bots that survive aren’t the ones that tried to do everything. They’re the ones that handled one job cleanly, stayed accurate when things changed, and didn’t trap people in loops. You’ve probably felt the difference yourself. A bot that helps once earns a second chance. A bot that gets one answer wrong with confidence rarely does.

That’s why the chatbot future isn’t about pushing models harder. It’s about smoothing the rough edges. Fewer stale answers. Fewer handoffs that drop context. Fewer moments where someone gives up and thinks, “I’ll just email.”

If you’re deciding what to build next, don’t go big. Pick something obvious. Order tracking. Booking changes. Basic FAQs. Anchor it in content you already trust. Pay attention to what people actually type. Fix what breaks. Do it again. That loop beats ambition every time.